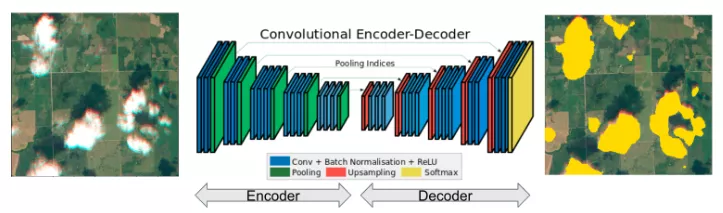

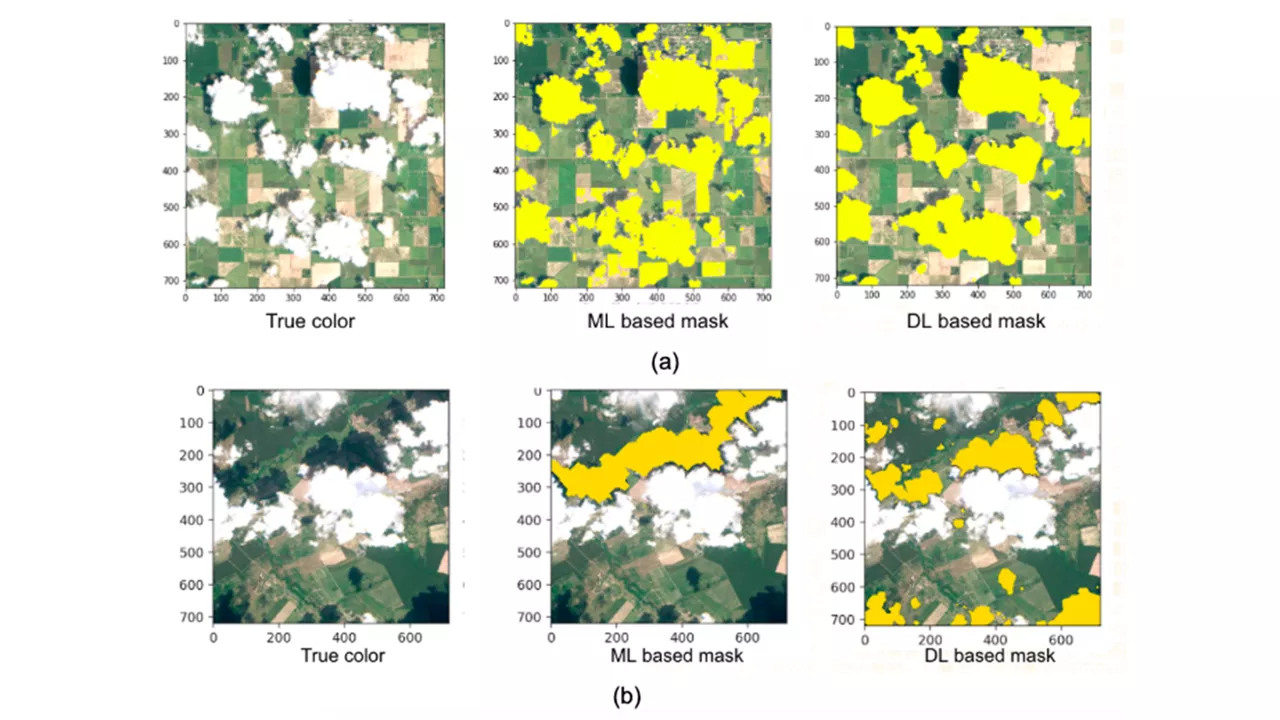

Climate consumes tens of thousands of satellite field images per day, so the cloud and cloud shadow identification algorithm needs to be deployed as part of a scalable ARD processing pipeline. We proposed a pipeline that cuts large images into tiles, applies the deep-learning models to each tile, and stitches the results back together with few image artifacts, so that it scales to many image sizes.
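To make the cut-and-stitch idea concrete, here is a minimal sketch in Python with NumPy. It is not the production pipeline: the function names, the tile size, the overlap, and the choice to average predictions where tiles overlap are all assumptions made for illustration, and `model` stands in for any callable that returns a per-pixel score map for a tile.

```python
import numpy as np


def tile_origins(length, tile, stride):
    """Start offsets of tiles of size `tile` that together cover [0, length)."""
    if length <= tile:
        return [0]
    origins = list(range(0, length - tile, stride))
    origins.append(length - tile)  # last tile sits flush with the far edge
    return origins


def predict_tiled(image, model, tile=256, overlap=32):
    """Apply `model` to overlapping tiles and average the overlapping predictions.

    `model` is a hypothetical callable mapping a (tile, tile[, bands]) array
    to a (tile, tile) score map; averaging is one simple way to soften seams.
    """
    if not 0 <= overlap < tile:
        raise ValueError("overlap must be in [0, tile)")
    h, w = image.shape[:2]
    stride = tile - overlap

    # Pad images smaller than one tile so the model always sees a full tile.
    pad = ((0, max(tile - h, 0)), (0, max(tile - w, 0)))
    pad += ((0, 0),) * (image.ndim - 2)
    padded = np.pad(image, pad, mode="edge")
    ph, pw = padded.shape[:2]

    total = np.zeros((ph, pw), dtype=np.float64)  # sum of predictions
    count = np.zeros((ph, pw), dtype=np.float64)  # tiles covering each pixel
    for y in tile_origins(ph, tile, stride):
        for x in tile_origins(pw, tile, stride):
            patch = padded[y:y + tile, x:x + tile]
            total[y:y + tile, x:x + tile] += model(patch)
            count[y:y + tile, x:x + tile] += 1

    # Every pixel is covered at least once, so the division is safe.
    return (total / count)[:h, :w]
```

Because the last tile in each direction is shifted back to sit flush with the image edge, the same code handles images whose sides are not multiples of the tile size, which is one way to support many image sizes with a fixed-input model.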

The journey to develop cloud and cloud shadow detection solutions highlights a typical set of problems, in which scaling our analysis efficiently to a global level is what enables production implementation of data-driven algorithms. This project requires cross-domain knowledge spanning data science, machine learning, remote sensing, atmospheric science, agronomy, and engineering infrastructure. At Climate, we are working on many more projects that improve our customers’ experience by applying data science in agriculture.